| Method | Org. Form | Repr. Type | Agg. Depth | Learning |

|---|---|---|---|---|

| Flat Aggregation | Flat | Text | 0 | Training-free |

| Embedding-Based GNN | Structured | Embedding | Multi-hop | Training-free |

| Text-Based GNN | Structured | Text | Multi-hop | Training-free |

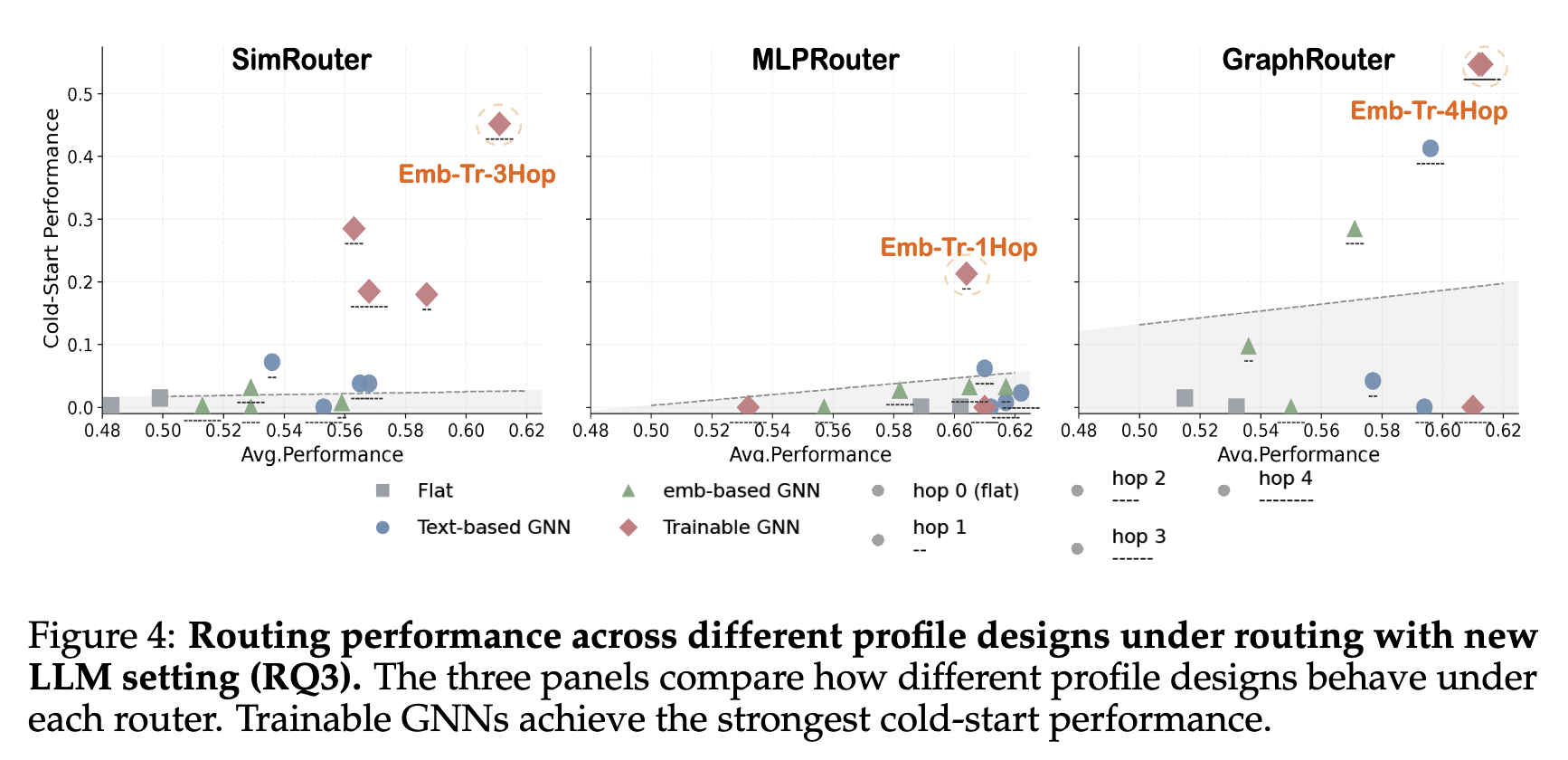

| Trainable GNN | Structured | Embedding | Multi-hop | Trainable |

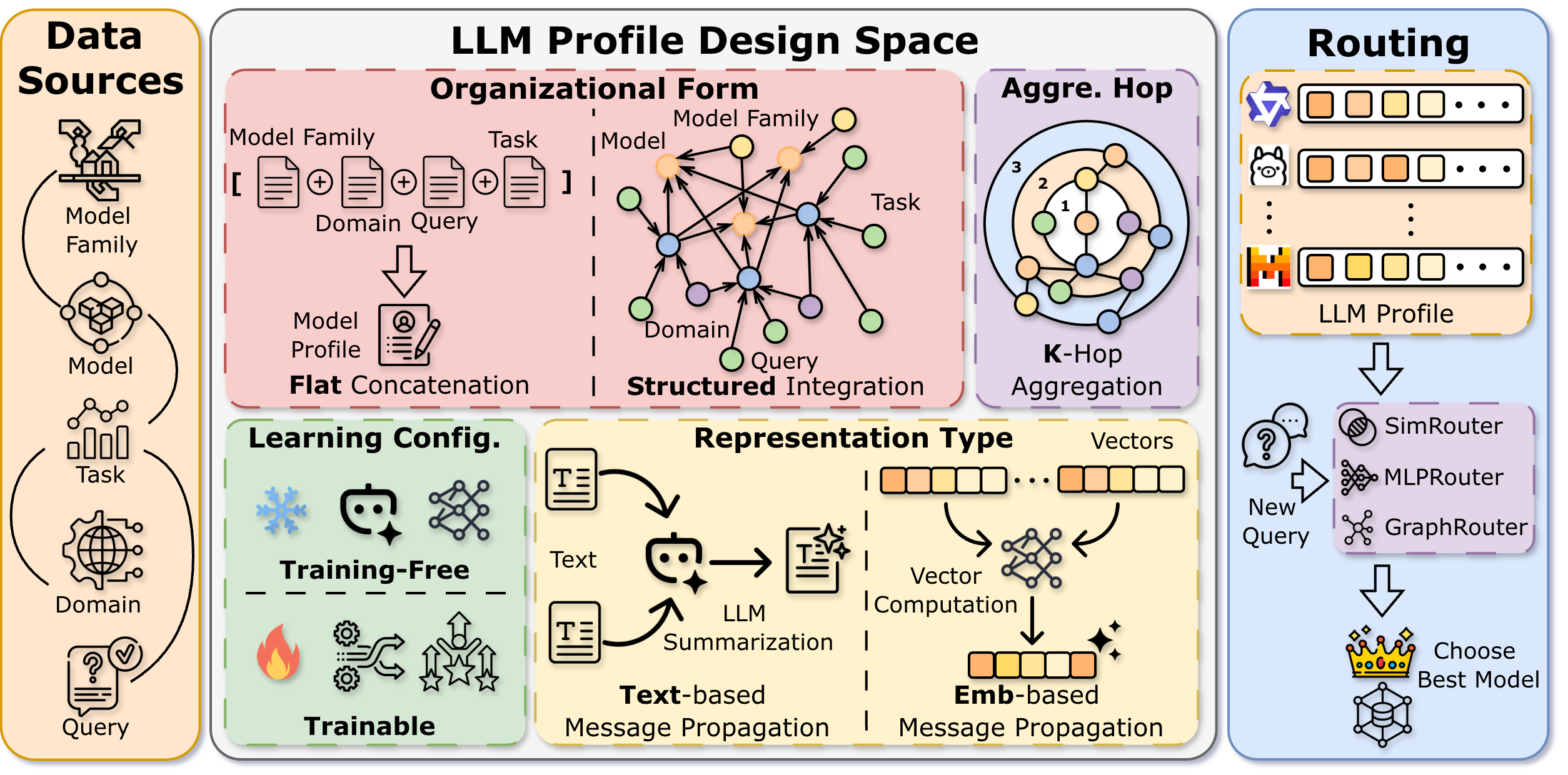

RouteProfile Design Space

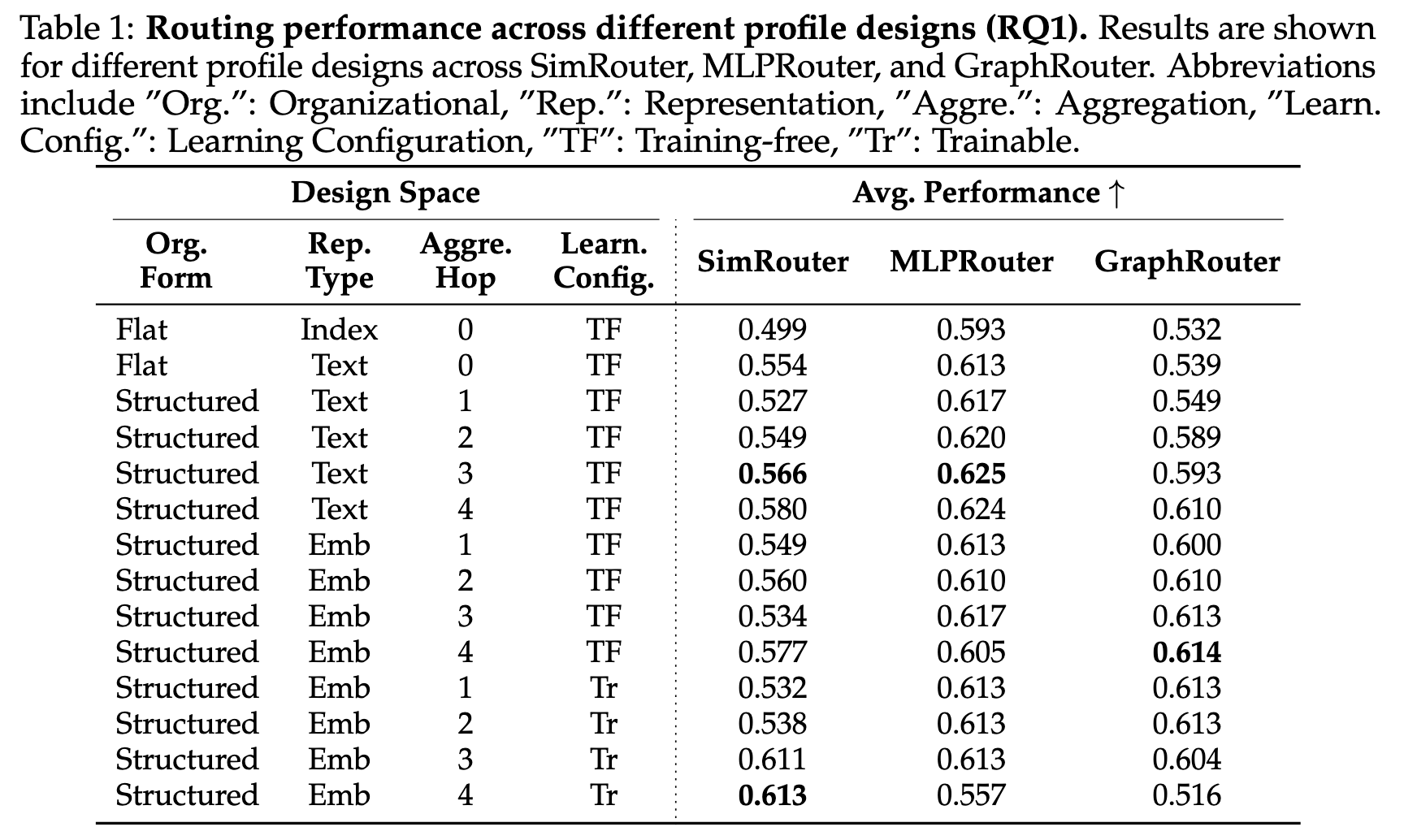

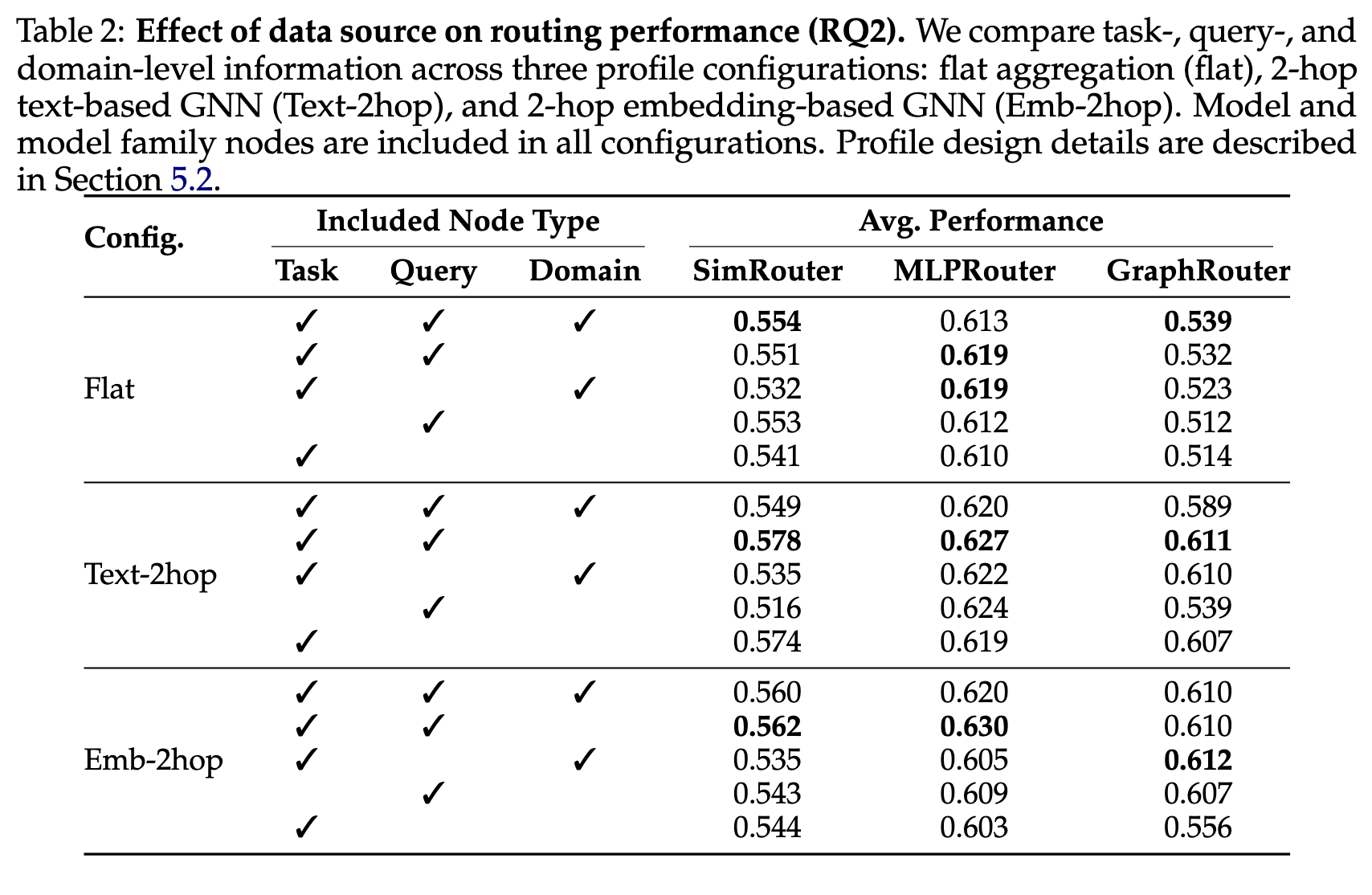

We view LLM profiling as a structured information integration problem over heterogeneous interaction histories spanning model family metadata, domain coverage, benchmark evaluations, and query-level instances. RouteProfile characterizes the design space of LLM profiles along four key dimensions:

Organizational Form specifies how interaction histories are organized before integration. In a flat form, available information is directly concatenated into plain text or a single vector. In a structured form, relational information among models, tasks, domains, and queries is explicitly modeled through a graph, enabling richer relational reasoning.
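The contrast between the two organizational forms can be sketched in a few lines of Python. This is a minimal illustration, not the RouteProfile implementation: the record fields, the node types, the edge schema, and the helper names `flat_profile` and `build_graph` are all assumptions made for the example.

```python
from collections import defaultdict

# Hypothetical interaction records; every name and score is illustrative only.
records = [
    {"model": "model-a", "family": "family-x", "domain": "math", "benchmark": "bench-1", "score": 0.71},
    {"model": "model-a", "family": "family-x", "domain": "code", "benchmark": "bench-2", "score": 0.64},
    {"model": "model-b", "family": "family-y", "domain": "math", "benchmark": "bench-1", "score": 0.58},
]

def flat_profile(model, records):
    """Flat form: concatenate every record about `model` into plain text."""
    parts = [
        f"{r['benchmark']} ({r['domain']}): {r['score']:.2f}"
        for r in records
        if r["model"] == model
    ]
    return f"{model}: " + "; ".join(parts)

def build_graph(records):
    """Structured form: an undirected heterogeneous graph as an adjacency map.

    Nodes are (type, name) tuples. The edge schema below is an assumption.
    """
    adj = defaultdict(set)
    for r in records:
        m = ("model", r["model"])
        f = ("family", r["family"])
        b = ("benchmark", r["benchmark"])
        d = ("domain", r["domain"])
        for u, v in [(m, f), (m, b), (b, d)]:
            adj[u].add(v)
            adj[v].add(u)
    return adj

flat = flat_profile("model-a", records)
graph = build_graph(records)
```

In the flat form, `model-a` only sees its own records. In the graph, `model-a` and `model-b` are linked through the shared `bench-1` node, which is the kind of relational signal a flat concatenation discards.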

Representation Type determines the information fusion mechanism. Text representations are produced by prompting an LLM to summarize neighborhood information into natural language descriptions. Embedding representations are dense vectors computed through neural message passing, capturing fine-grained semantic signals.
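The two fusion mechanisms can be illustrated side by side. The sketch below is hypothetical: `text_prompt` only builds the prompt string (the LLM call is left out, and the prompt wording is invented for the example), and `mean_aggregate` uses an unweighted mean as a stand-in for neural message passing, which in practice would typically involve learned or pretrained transformations.

```python
def text_prompt(node, neighbor_texts):
    """Text representation: build a prompt asking an LLM to summarize neighbors.

    The LLM call itself is omitted; the prompt wording is an assumption.
    """
    evidence = "\n".join(f"- {t}" for t in neighbor_texts)
    return (
        f"Summarize what the following evidence says about {node}:\n"
        f"{evidence}\n"
        "Write a short profile in natural language."
    )

def mean_aggregate(node, features, adj):
    """Embedding representation: one round of mean message passing.

    The new vector averages the node's own vector with its neighbors' vectors.
    """
    vectors = [features[node]] + [features[n] for n in sorted(adj[node])]
    dim = len(features[node])
    return [sum(v[i] for v in vectors) / len(vectors) for i in range(dim)]

# Hypothetical toy graph: one model node connected to two benchmark nodes.
adj = {
    "model-a": {"bench-1", "bench-2"},
    "bench-1": {"model-a"},
    "bench-2": {"model-a"},
}
features = {
    "model-a": [1.0, 0.0],
    "bench-1": [0.0, 1.0],
    "bench-2": [0.5, 0.5],
}

prompt = text_prompt("model-a", ["bench-1 (math): 0.71", "bench-2 (code): 0.64"])
embedding = mean_aggregate("model-a", features, adj)
```

The text path yields a string that an LLM would turn into a natural language profile, while the embedding path yields a fixed-size vector; both consume the same neighborhood.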

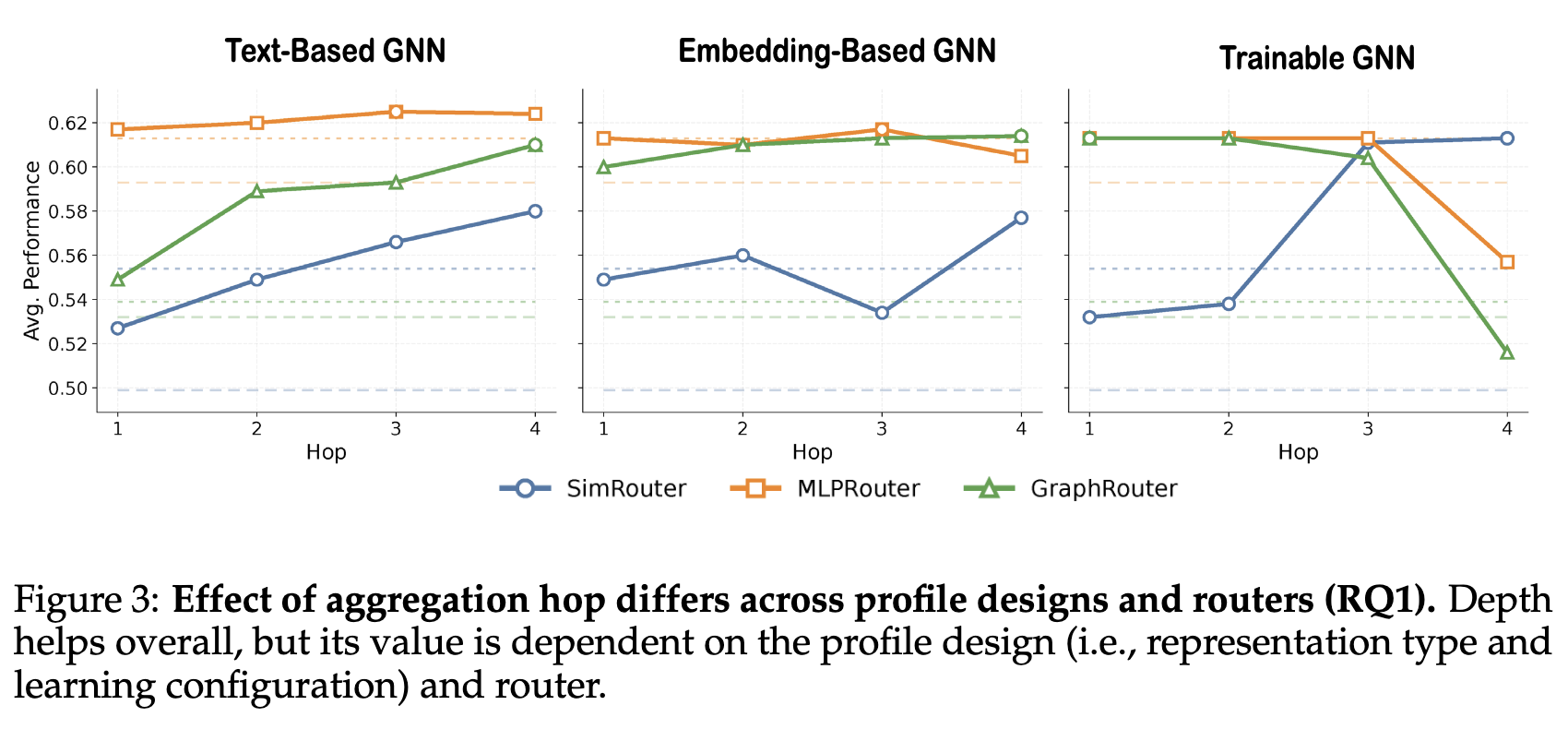

Aggregation Depth controls the scope of information integration within the graph, ranging from local profiles (0-hop, using no neighborhood information) to multi-hop aggregation that incorporates higher-order context from the interaction graph.
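The effect of depth can be seen on a three-node path graph. The following is a minimal sketch under assumed names (`propagate`, the toy graph, and the scalar features are all hypothetical), again using plain mean aggregation rather than any specific GNN layer.

```python
def propagate(features, adj, depth):
    """Apply `depth` rounds of mean aggregation; depth=0 returns the inputs unchanged."""
    current = {node: list(vec) for node, vec in features.items()}
    for _ in range(depth):
        updated = {}
        for node, vec in current.items():
            # Each node averages its own vector with its neighbors' vectors.
            group = [vec] + [current[n] for n in adj.get(node, ())]
            updated[node] = [sum(col) / len(group) for col in zip(*group)]
        current = updated
    return current

# Hypothetical path graph: model - benchmark - domain.
adj = {
    "model": ["benchmark"],
    "benchmark": ["model", "domain"],
    "domain": ["benchmark"],
}
# Only the domain node carries a nonzero signal.
features = {"model": [0.0], "benchmark": [0.0], "domain": [1.0]}

for depth in (0, 1, 2):
    print(depth, propagate(features, adj, depth)["model"])
```

Because the domain node is two hops from the model node, the model's value stays at zero for depths 0 and 1 and becomes nonzero only at depth 2, which is what "higher-order context" amounts to in this toy setting.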

Learning Configuration indicates whether the profiling process is training-free or trainable. In a trainable setting, the aggregation function can be optimized via self-supervised masked reconstruction on the interaction graph, learning to produce more discriminative model representations.
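A heavily simplified sketch of masked reconstruction follows. It assumes a toy aggregator with only two scalar parameters (`w` and `b`) that reconstructs a masked node's vector from the mean of its neighbors, trained with plain stochastic gradient descent on squared error. The graph, features, parameterization, and hyperparameters are all hypothetical and are not taken from RouteProfile.

```python
import random

def neighbor_mean(node, features, adj):
    """Mean of neighbor vectors; the masked node's own vector is excluded."""
    vecs = [features[n] for n in adj[node]]
    return [sum(col) / len(vecs) for col in zip(*vecs)]

def loss_and_grads(w, b, node, features, adj):
    """Mean squared error of reconstructing the masked node, with gradients."""
    context = neighbor_mean(node, features, adj)
    target = features[node]
    errors = [w * c + b - t for c, t in zip(context, target)]
    n = len(errors)
    loss = sum(e * e for e in errors) / n
    grad_w = sum(2 * e * c for e, c in zip(errors, context)) / n
    grad_b = sum(2 * e for e in errors) / n
    return loss, grad_w, grad_b

def mean_loss(w, b, features, adj):
    """Average reconstruction loss when each node is masked in turn."""
    return sum(loss_and_grads(w, b, n, features, adj)[0] for n in features) / len(features)

def train(features, adj, steps=300, lr=0.1, seed=0):
    """Mask one randomly chosen node per step and take a gradient step."""
    rng = random.Random(seed)
    nodes = sorted(features)
    w, b = 0.0, 0.0
    for _ in range(steps):
        node = rng.choice(nodes)
        _, grad_w, grad_b = loss_and_grads(w, b, node, features, adj)
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

# Hypothetical triangle graph with similar feature vectors on every node.
adj = {
    "model-a": ["bench-1", "domain-math"],
    "bench-1": ["model-a", "domain-math"],
    "domain-math": ["model-a", "bench-1"],
}
features = {
    "model-a": [0.9, 0.1],
    "bench-1": [0.8, 0.2],
    "domain-math": [0.7, 0.3],
}

before = mean_loss(0.0, 0.0, features, adj)
w, b = train(features, adj)
after = mean_loss(w, b, features, adj)
```

The supervision signal comes from the graph itself: no routing labels are needed, only the ability to hide a node and ask the aggregator to recover it from its neighborhood. A trainable GNN would replace the two scalars with learned layer weights.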

Overview of the RouteProfile design space. LLM profiles are constructed from interaction histories along four dimensions: organizational form, representation type, aggregation depth, and learning configuration.